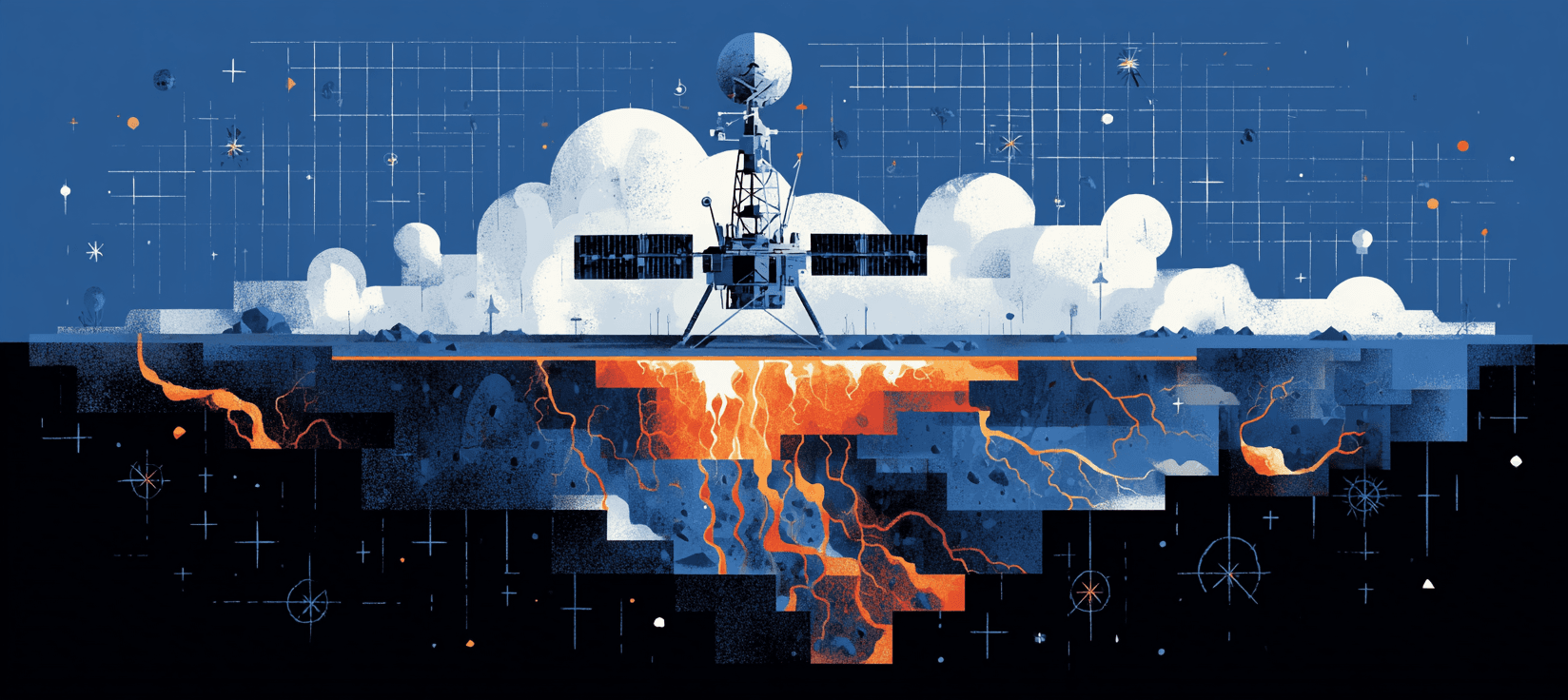

Every wildfire season in the Western US, families in fire-prone communities check air quality apps before deciding whether their kids can play outside. Local air districts approve or deny prescribed burns based on emissions models. County planners draw evacuation zones. All of it rests on satellite-derived data about what fire actually releases into the atmosphere.

In late February, a team from Lund University and UC Berkeley published findings showing that this data has been dramatically wrong.

The Eckdahl et al. study, in Science Advances, measured what satellites missed in Swedish wildfires: underground peat combustion, smoldering slowly beneath the surface, invisible to every orbital sensor. Across Sweden, the satellites underestimated wildfire emissions by 50%. In Dalarna County, where researchers did ground-level verification, real emissions were fourteen times what the models showed.

Peat and deep organic soils burn at low intensity for weeks, producing enormous particulate matter and carbon but almost no thermal signature. Satellites were built to detect heat from above. What smolders below the surface doesn't register.
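The two figures from the study are stated in different terms, one as a percentage shortfall and one as a multiple, which makes them hard to compare at a glance. The short Python sketch below puts them on the same scale. It assumes that "underestimated by 50%" means the satellite figure was half of the true figure; the study may define the shortfall differently, and the function names are illustrative only.

```python
def ratio_from_shortfall(shortfall: float) -> float:
    """True emissions divided by modeled emissions, given the fraction
    of true emissions the model missed (assumed definition)."""
    if not 0.0 <= shortfall < 1.0:
        raise ValueError("shortfall must be in [0, 1)")
    return 1.0 / (1.0 - shortfall)


def shortfall_from_ratio(ratio: float) -> float:
    """Fraction of true emissions the model missed, given how many
    times larger the true figure is than the modeled one."""
    if ratio < 1.0:
        raise ValueError("ratio must be at least 1")
    return 1.0 - 1.0 / ratio


# Sweden-wide figure from the article: a 50% shortfall.
national_ratio = ratio_from_shortfall(0.50)

# Dalarna County figure from the article: true emissions 14x the modeled value.
dalarna_shortfall = shortfall_from_ratio(14.0)

print(f"Sweden-wide: true emissions are {national_ratio:.1f}x the modeled value")
print(f"Dalarna: the models missed {dalarna_shortfall:.1%} of true emissions")
```

Under that assumption, the national shortfall corresponds to true emissions of twice the modeled value, while the Dalarna multiple means the models captured only about one fourteenth of what actually burned.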

Where the Numbers Land

In California, every prescribed burn requires a smoke management plan approved through the Air Resources Board and local air quality districts. Each plan estimates how much particulate matter the burn will release, how far the smoke will travel, and which neighborhoods will breathe it. The state treats roughly 125,000 acres annually with prescribed fire and is scaling up after Governor Newsom's October 2025 executive order to reduce permitting barriers. Oregon's smoke management program runs on the same logic, with the Department of Forestry issuing daily burn forecasts and fuel limits tied to emissions modeling.

These programs are the primary tool for reducing catastrophic wildfire in places like Jackson County, Oregon, or the Sierra Nevada foothills, where prescribed burn windows are already narrow. Whether burns happen depends on emissions estimates. Those estimates flow from satellite-derived databases. And the blind spot was already partially documented before Eckdahl: earlier research found that satellite approaches missed 54% of PM2.5 emissions from low-intensity and understory fires compared to ground-based inventories.

The measurement gap cuts both ways. A prescribed burn denied because modeled emissions were too high may have been compared against a wildfire baseline that was already wrong. If uncontrolled fire releases far more than satellites show, the case for prescribed burning actually gets stronger. But the prescribed burn's own emissions might also be underestimated, particularly the smoldering phase that produces the heaviest particulate loads closest to the ground where people breathe. A Northern California study found most prescribed burns conducted from 2017 to 2020 didn't even overlap with the highest smoke-risk areas. The data guiding where and whether to burn may be wrong in multiple directions simultaneously.

Research estimates roughly 24,000 annual US deaths from wildfire smoke exposure. That epidemiology depends on accurate exposure data. If the satellite-derived emissions feeding those exposure models have been systematically low, the health toll is likely understated too. The communities breathing the worst of it, closest to fire lines with the least capacity to monitor air quality or seal their homes against smoke, are the ones most invisible inside the gap.

Western Soil Burns Too

Eckdahl's team acknowledges that Western US forests lack the thick peat of Scandinavian boreal landscapes. But the Pacific Northwest holds forested wetlands classified as peatlands along the Oregon coast and Washington lowlands, with organic soils deep enough to sustain underground combustion. A wildfire in a southwestern Oregon Douglas-fir forest consumed 2.3 kg of carbon per square meter from the organic soil horizon alone. In 2011, deep organic fuel combustion in Minnesota and North Carolina produced some of the largest PM2.5 emissions recorded that year despite moderate burned area.

Through the Western Fire & Forest Collaborative, Eckdahl is now adapting these methods to lower-48 forests, studying soil bacteria and fungi and how they shape a forest's carbon dynamics after fire. The work is early, focused on soil microbiology rather than direct replication of the Swedish peat methodology. The researchers are careful to note that local climate, vegetation types, and soil characteristics all dramatically influence how much carbon a wildfire actually releases. How much Western soil burns underground, and how badly the satellites miss it, remains an open question. But the pattern from Sweden is clear enough to unsettle every number downstream.

A Season of Corrections

Eckdahl's finding lands in a stretch of months that keeps delivering the same shape of discovery. In November, a Nature study found the natural land carbon sink substantially smaller than estimated. In January, the updated Global Fire Emissions Database revised total fire carbon emissions upward by 70%. In February, scientists reported calcifying plankton largely missing from ocean climate models. In March, a warming acceleration study from the Potsdam Institute for Climate Impact Research (PIK) confirmed that the rate of warming itself was faster than instruments had captured.

The same pattern, study after study: the tools built to measure climate change have been systematically understating it. The interventions designed from those tools are sized for a smaller problem than the one that exists. For Western fire communities, a corrected baseline reshapes the next decade of prescribed burn programs, the health studies that will drive smoke regulation, the risk calculus for insurers already retreating from fire-prone counties. All at once.

For families in Western fire country, none of this is abstract. The decisions that shape their safety, their air, their children's health were called data-driven. The data was missing what burned beneath the surface. The instruments saw what they could see. The ground was burning anyway.

People who live with wildfire smoke already knew something was off. The app said moderate. Their kids were coughing.

Things to follow up on...

- The unregulated death toll: A Science Advances study ties wildfire smoke to roughly 24,100 US deaths annually from PM2.5 exposure that remains unregulated by the EPA because wildfires are classified as natural disasters.

- Future smoke projections worsen: A PNAS study projects that under 3°C of warming, wildfire smoke exposure could drive 64,000 annual US deaths, a 60% increase over current estimates that may themselves be understated.

- Fire databases catch up: The newly released GFED5 revised global fire carbon emissions to 3.4 petagrams of carbon per year, a 70% increase over its predecessor, partly by improving detection of small fires the satellites had been missing.

- Warming acceleration confirmed: PIK researchers statistically confirmed for the first time that global warming has accelerated since 2015, with the planet potentially breaching 1.5°C before 2030, compressing the timeline for every fire-management decision built on slower assumptions.
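
For readers who want to check the database revision above, a few lines of Python back out the earlier total implied by the two numbers quoted in this article (3.4 petagrams per year and a 70% increase). This is only arithmetic on those two figures, not a value taken from the database itself, and the function name is illustrative.

```python
def implied_previous_total(revised_total: float, fractional_increase: float) -> float:
    """Earlier total implied by a revised total and its fractional increase."""
    if fractional_increase <= -1.0:
        raise ValueError("fractional_increase must be greater than -1")
    return revised_total / (1.0 + fractional_increase)


gfed5_total_pg = 3.4   # petagrams of carbon per year, as quoted in the article
increase = 0.70        # 70% increase over the predecessor, as quoted

previous_pg = implied_previous_total(gfed5_total_pg, increase)
added_pg = gfed5_total_pg - previous_pg

print(f"Implied earlier total: {previous_pg:.1f} Pg per year")
print(f"Emissions added by the revision: {added_pg:.1f} Pg per year")
```

On those figures, the revision added roughly 1.4 petagrams of carbon per year to an implied earlier total of about 2.0.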